The method of least squares grew out of the fields of astronomy and geodesy, as scientists and mathematicians sought to provide solutions to the challenges of navigating the Earth's oceans during the Age of Discovery. The accurate description of the behavior of celestial bodies was the key to enabling ships to sail in open seas, where sailors could no longer rely on land sightings for navigation. The method was the culmination of several advances that took place during the course of the eighteenth century. The least-squares method was first published by Adrien-Marie Legendre (1805), though it is usually also co-credited to Carl Friedrich Gauss (1809), who contributed significant theoretical advances to the method and may have also used it in his earlier work in 17.

The following discussion is mostly presented in terms of linear functions, but the use of least squares is valid and practical for more general families of functions. The nonlinear problem is usually solved by iterative refinement: at each iteration the system is approximated by a linear one, and thus the core calculation is similar in both cases. Polynomial least squares describes the variance in a prediction of the dependent variable as a function of the independent variable and the deviations from the fitted curve.

By iteratively applying a local quadratic approximation to the likelihood (through the Fisher information), the least-squares method may also be used to fit a generalized linear model. The method of least squares can also be derived as a method of moments estimator. When the observations come from an exponential family with identity as its natural sufficient statistic and mild conditions are satisfied (e.g. for normal, exponential, Poisson and binomial distributions), standardized least-squares estimates and maximum-likelihood estimates are identical.
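The iterative refinement described above can be illustrated with the Gauss-Newton method, one common (but not the only) way to solve nonlinear least-squares problems. The sketch below is a minimal pure-Python example under stated assumptions: the exponential model \(y \approx a e^{bx}\), the function name `gauss_newton_exp`, and the starting values are hypothetical choices for illustration. Each iteration linearizes the model around the current parameters and solves the resulting linear least-squares subproblem through its 2×2 normal equations.

```python
import math


def gauss_newton_exp(xs, ys, a, b, iterations=50, tol=1e-12):
    """Fit y ~ a * exp(b * x) by Gauss-Newton (illustrative model only).

    Each iteration linearizes the model around the current (a, b) and
    solves the linear least-squares subproblem via the 2x2 normal
    equations (J^T J) delta = J^T r.
    """
    for _ in range(iterations):
        # Accumulate the entries of J^T J and J^T r.
        s11 = s12 = s22 = g1 = g2 = 0.0
        for x, y in zip(xs, ys):
            e = math.exp(b * x)
            r = y - a * e        # residual: observed minus fitted value
            j1 = e               # partial derivative of the model w.r.t. a
            j2 = a * x * e       # partial derivative of the model w.r.t. b
            s11 += j1 * j1
            s12 += j1 * j2
            s22 += j2 * j2
            g1 += j1 * r
            g2 += j2 * r
        det = s11 * s22 - s12 * s12
        if det == 0.0:
            raise ValueError("normal equations are singular")
        # Solve the 2x2 linear system with Cramer's rule.
        da = (g1 * s22 - s12 * g2) / det
        db = (s11 * g2 - s12 * g1) / det
        a += da
        b += db
        if abs(da) < tol and abs(db) < tol:
            break
    return a, b


# Usage: noise-free data generated from a = 2, b = 0.5.
xs = [0, 1, 2, 3, 4]
ys = [2.0 * math.exp(0.5 * x) for x in xs]
a_hat, b_hat = gauss_newton_exp(xs, ys, a=1.8, b=0.45)
print(a_hat, b_hat)  # close to 2.0 and 0.5 respectively
```

With noise-free data and a starting guess reasonably close to the true parameters, the iterates converge quickly. From a poor starting guess, plain Gauss-Newton can diverge, which is one reason damped variants such as Levenberg-Marquardt are often used in practice.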

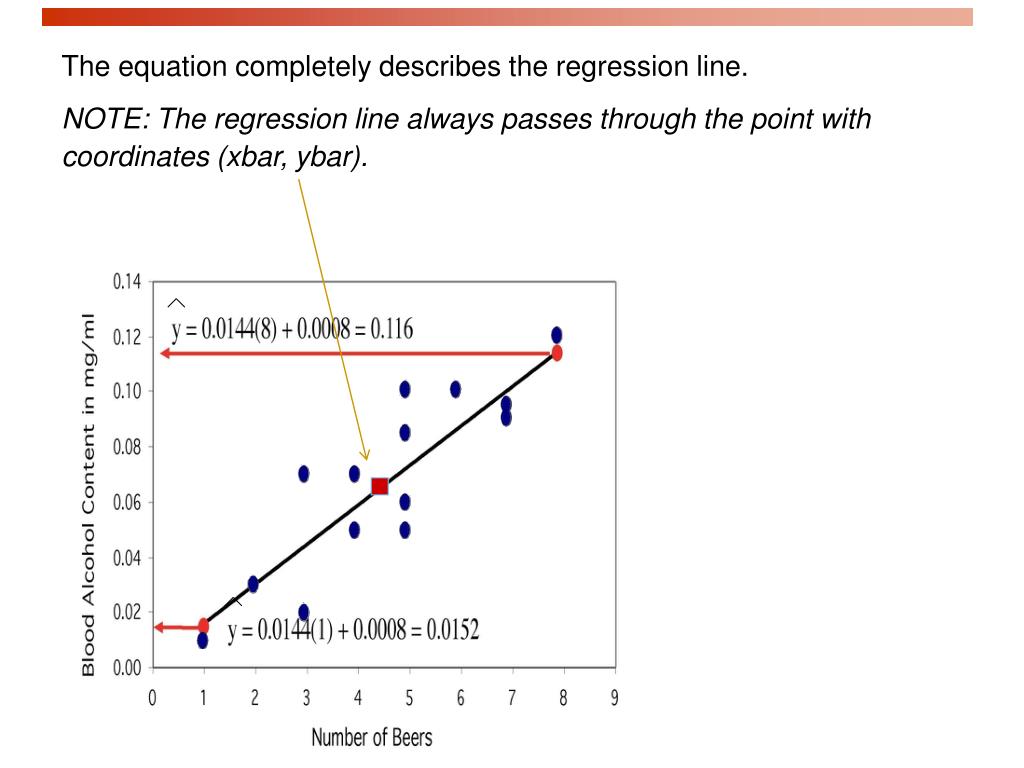

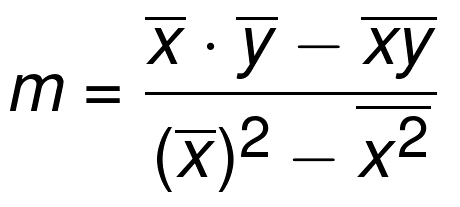

The method of least squares is a parameter estimation method in regression analysis based on minimizing the sum of the squares of the residuals, \(S = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2\) (a residual being the difference between an observed value \(y_i\) and the fitted value \(\hat{y}_i\) provided by a model), made in the results of each individual equation. Its most important application is in data fitting, for example fitting a quadratic function to a set of data points or fitting a conic to a set of points. Least-squares problems fall into two categories, linear (ordinary) least squares and nonlinear least squares, depending on whether or not the residuals are linear in all unknowns. The linear least-squares problem occurs in statistical regression analysis; it has a closed-form solution. When the problem has substantial uncertainties in the independent variable (the \(x\) variable), simple regression and least-squares methods run into problems; in such cases, the methodology required for fitting errors-in-variables models may be considered instead of that for least squares.

The correlation coefficient \(r\) is always between –1 and +1: \(-1 \le r \le 1\). The size of \(r\) indicates the strength of the linear relationship between \(x\) and \(y\): values of \(r\) close to –1 or to +1 indicate a stronger linear relationship. If \(r = 0\), there is no linear relationship between \(x\) and \(y\) (no linear correlation). If \(r = 1\), there is perfect positive correlation, and if \(r = -1\), there is perfect negative correlation. In both of these cases, all of the original data points lie on a straight line. Of course, in the real world, this will not generally happen.

If you suspect a linear relationship between \(x\) and \(y\), then \(r\) can measure how strong the linear relationship is.
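For the straight-line case, both the closed-form linear least-squares solution and the correlation coefficient \(r\) reduce to a few sums about the sample means. The following is a minimal pure-Python sketch, assuming the standard simple-regression formulas (slope \(= S_{xy}/S_{xx}\), intercept \(= \bar{y} - \text{slope}\cdot\bar{x}\)) and Pearson's definition \(r = S_{xy}/\sqrt{S_{xx}S_{yy}}\); the function names `fit_line`, `pearson_r` and `_centered_sums` are hypothetical.

```python
import math


def _centered_sums(xs, ys):
    """Return (Sxx, Syy, Sxy, mean_x, mean_y) computed about the sample means."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need two equal-length sequences with n >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxx, syy, sxy, mean_x, mean_y


def fit_line(xs, ys):
    """Closed-form ordinary least-squares fit of y = intercept + slope * x."""
    sxx, _, sxy, mean_x, mean_y = _centered_sums(xs, ys)
    slope = sxy / sxx  # raises ZeroDivisionError if all x values are equal
    intercept = mean_y - slope * mean_x
    return intercept, slope


def pearson_r(xs, ys):
    """Pearson correlation coefficient r (mathematically within [-1, 1])."""
    sxx, syy, sxy, _, _ = _centered_sums(xs, ys)
    return sxy / math.sqrt(sxx * syy)


# Points exactly on the line y = 3 - 2x: perfect negative correlation.
print(fit_line([0, 1, 2, 3], [3, 1, -1, -3]))   # (3.0, -2.0)
print(pearson_r([0, 1, 2, 3], [3, 1, -1, -3]))  # -1.0

# Scattered points: a weaker positive linear relationship.
print(pearson_r([1, 2, 3, 4, 5], [2, 4, 5, 4, 5]))  # about 0.775
```

The first example is the case in which all of the data points lie on a straight line, so \(r = -1\); in the second, the points scatter around the fitted line and \(r\) falls strictly between 0 and 1.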
